Template OCR Is Dead: How AI Document Extraction APIs Cut Dev Time by 80%

Your CTO walks into the standup. “Invoice processing MVP ships next quarter,” he says. “Should be straightforward: just OCR and some regex.” It sounds like a standard two-sprint epic.

Fast forward six months. Three of your best backend engineers are still debugging coordinate drift on scanned PDFs. The highly tuned regex for line items just broke again because Vendor #47 decided to move their SKU column exactly 12 pixels to the right. Your carefully crafted bounding box detection fails completely on any document scanned below 300 DPI. To make matters worse, the intern who originally wrote the Tesseract configuration left for a crypto startup, leaving behind a codebase nobody understands.

Sound painfully familiar? You are not alone. According to a recent technology architecture study by Gartner, over 65% of enterprise software teams severely underestimate the technical debt associated with building internal data extraction pipelines.

The truth is, template-based OCR isn’t just slow; it’s a massive technical liability. Every new document format your business encounters requires another fragile if/else branch, another brittle regular expression, and another sprint burned entirely on edge cases. Meanwhile, your company’s core product sits neglected in the backlog.

Fortunately, the landscape has radically shifted in 2026. There is a much better way to handle unstructured data. Modern, scalable AI document extraction APIs powered by semantic AI do not read pixels; they understand structural language. No more templates. No more coordinate hunting. Just clean, validated JSON and code that ships reliably.

In this definitive engineering guide, we will break down exactly why legacy OCR architectures are failing, the hidden costs of maintaining them, and how migrating to AI document extraction APIs can instantly cut your development time by over 80%.

Table of Contents

- 1. The Hidden Cost of Template-Based OCR Systems

- 2. The Maintenance Tax: Why Linear Scaling Fails

- 3. Real-World Case Study: The 4-Month Fintech Nightmare

- 4. What Is Semantic AI Document Extraction?

- 5. Schema-First vs. Template-First Architecture

- 6. Understanding vs. Pattern-Matching: Why AI Doesn’t Break

- 7. AI Document Extraction APIs vs. In-House OCR (Comparison)

- 8. Why CTOs Are Forcing the Shift to API-First

- 9. Real-World Migration: Legacy OCR to API in 3 Days

- 10. Decision Framework: When to Build vs. Buy in 2026

- 11. How to Evaluate Document Extraction APIs

1. The Hidden Cost of Template-Based OCR Systems

Most engineering teams do not actively choose to build a legacy template OCR system; they inherit it. Usually, an ambitious developer deployed Tesseract combined with OpenCV around 2019, wrapped it in a lightweight Flask service, and enthusiastically called it “v1”. By the time 2026 rolls around, that “lightweight” service has metastasized into a monolithic beast of 4,000 lines of Python that nobody on the current team wants to touch.

Why Rule-Based Systems Still Plague Legacy Stacks

The architectural trap of template OCR is entirely invisible until you are fully immersed in it. Teams quickly find themselves drowning in edge cases that modern AI document extraction APIs solve out of the box. The typical legacy nightmare includes:

- Vendor-Specific Coordinate Mappings: Hardcoded X and Y coordinates stored in fragile YAML files.

- DPI-Dependent Bounding Boxes: Logic that works perfectly on digital PDFs but breaks catastrophically on smartphone scans.

- Rotated Image Handling: Requiring complex OpenCV affine transforms that bloat the codebase and eat up compute on every page.

- Font-Dependent Detection: Optical recognition that was trained specifically on Arial but hallucinates wildly when vendors use Calibri or custom branding fonts.

The ultimate result? A brittle data pipeline that works flawlessly on Tuesday morning and fails completely by Tuesday afternoon simply because a supplier updated their PDF generation software.

2. The Maintenance Tax: Why Linear Scaling Fails

Ideally, the cost of maintaining a system grows far more slowly than the workload it handles. Template OCR, however, scales linearly in the worst possible way: with document variety. If your business processes invoices from 50 different vendors, you are actively maintaining 50 different regex patterns. Got 200 vendors? God help your DevOps team.

When you ignore AI document extraction APIs, the real cost breakdown of building OCR from scratch usually looks like this:

- Weeks 1-2: Build the initial OCR pipeline and celebrate the early wins with clean, digital PDFs.

- Months 2-3: Begin discovering the harsh reality of edge cases (scanned vs. digital PDFs, multi-page tables spanning across page breaks, nested line items).

- Months 4-6: Rage-debugging why row 47’s total parsed as “SI47,230.00” instead of “$1,230.00” due to poor image binarization.

- Ongoing Future: Weekly fire drills whenever a vendor changes their layouts or adds a new tax column.

Maintenance is not 20% of the work in an OCR pipeline. It is 80% of the work. And the technical debt compounds daily.

3. Real-World Case Study: The 4-Month Fintech Nightmare

To quantify this pain, consider a Series B fintech company we recently spoke with (identity protected for NDA purposes, but they process roughly $400M in Accounts Payable annually). They made the fateful decision to build their document parsing engine in-house rather than leveraging AI document extraction APIs.

They dedicated 16 engineering weeks to building an in-house invoice extraction service. Their incredibly heavy tech stack included:

- Tesseract for base optical character recognition.

- OpenCV for deskewing and image normalization.

- Custom Computer Vision (CV) models trained specifically for table detection.

- Over 2,400 lines of complex regex just for field extraction.

- A dedicated Redis queue just to handle retry logic when the service crashed.

After four months of dedicated engineering time and hundreds of thousands of dollars in payroll, what was their accuracy on unseen vendor formats? A dismal 62%. Their accuracy on slightly rotated scans? 41%.

They had effectively created a dedicated “OCR engineer” role whose entire job was simply updating X/Y coordinates whenever vendors refreshed their PDF templates. Today, that role no longer exists at their company because they finally deleted the code and switched to an API.

4. What Is Semantic AI Document Extraction?

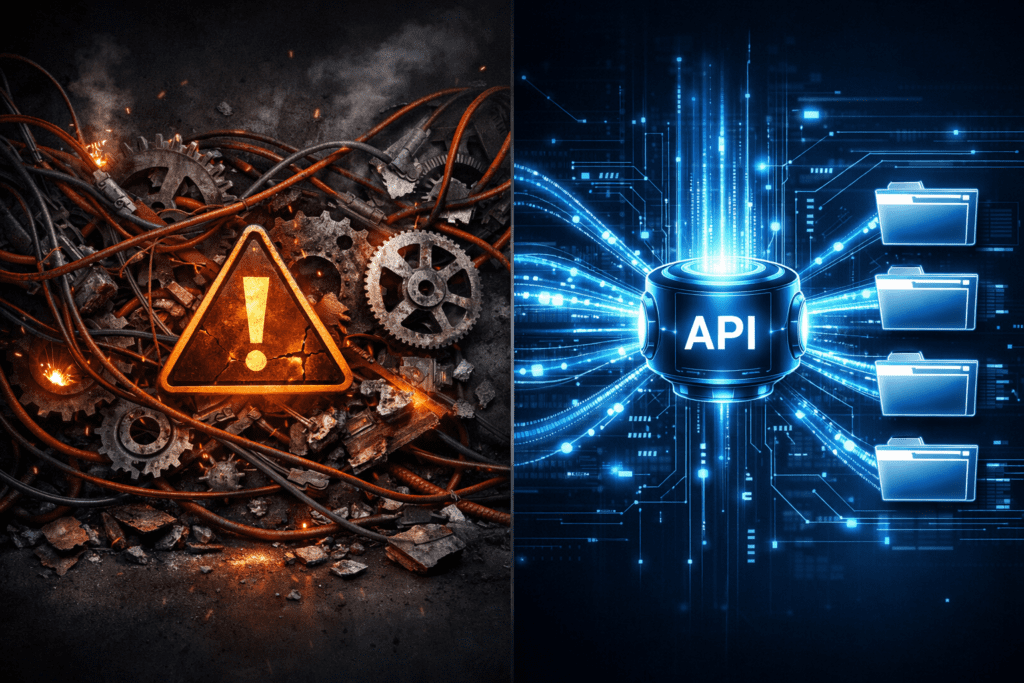

To understand why AI document extraction APIs are rapidly replacing legacy systems, we must look at the fundamental paradigm shift in how machines process information.

How LLM-Powered Extraction Actually Works (No Templates)

Traditional OCR asks a geometric question: “What text characters exist at pixel coordinates (x1, y1) to (x2, y2)?”

Semantic AI, driven by Large Language Models (LLMs), asks a contextual question: “Where is the invoice number, regardless of where it appears on the page?”

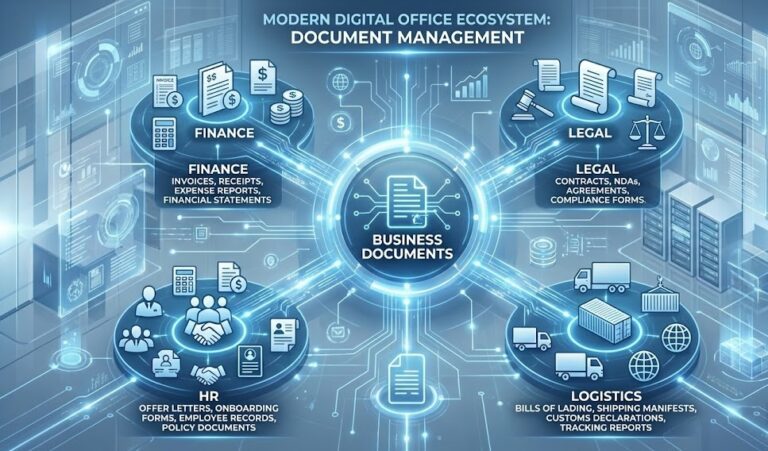

High-end AI document extraction APIs treat business documents like structured language, not like coordinate grids. The model reads the entire document context at once (headers, footers, complex nested tables, and fine print) and extracts meaning based on semantic understanding rather than geometric position.

The Key Differentiators of Semantic Engines

- Zero-Shot Field Detection: The AI model recognizes the concept of a “Total Amount” whether the document labels it as “Total:”, “Grand Total”, “Amount Due”, or in another language, such as the French “Montant dû”.

- Dynamic Table Parsing: Complex line items are extracted by their logical structure and relational context, not by hardcoded row coordinates.

- Multi-Page Coherence: Context automatically carries across page breaks, meaning a table that spans three pages is seamlessly stitched together into one JSON array.

- Built-in Confidence Scoring: Per-field confidence metrics allow you to programmatically flag uncertain extractions for human review, ensuring pristine data quality.

5. Schema-First vs. Template-First Architecture

The transition to AI document extraction APIs represents a fundamental architectural shift. Template OCR forces your engineering team to conform to each document’s structure. Semantic AI, conversely, lets you define the strict structure your database needs and makes the extraction conform to it.

The Old Way: Fragile Template OCR

In the legacy approach, developers must manually hunt for magic numbers and hope their regex holds up.

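```python
# Deprecated: manual coordinate extraction
import re

import numpy as np
import pytesseract
from pdf2image import convert_from_path

def pdf_to_image(pdf_path):
    # Assumed helper: rasterize page 1 at 300 DPI into a NumPy array
    return np.array(convert_from_path(pdf_path, dpi=300)[0])

def extract_total(pdf_path):
    img = pdf_to_image(pdf_path)
    # Magic numbers discovered via trial and error (pixel rows, then columns)
    crop = img[850:920, 1200:1650]
    text = pytesseract.image_to_string(crop)
    # Hope this regex holds
    match = re.search(r'\$\d{1,3}(?:,\d{3})*\.\d{2}', text)
    return match.group(0) if match else None
```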
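
To make the fragility concrete, the same currency pattern can be run against the kinds of strings OCR tends to return. This is a minimal sketch: `find_total` and the sample strings are illustrative assumptions, apart from the misread total quoted earlier in this article.

```python
import re

# Same currency pattern as in the template example above.
TOTAL_PATTERN = re.compile(r'\$\d{1,3}(?:,\d{3})*\.\d{2}')

def find_total(ocr_text):
    """Return the first dollar amount found in OCR text, or None."""
    match = TOTAL_PATTERN.search(ocr_text)
    return match.group(0) if match else None

# Hypothetical OCR outputs for the same invoice total.
samples = {
    "clean digital PDF": "Total: $1,230.00",
    "misread dollar sign": "Total: SI47,230.00",
    "European formatting": "Total: 1.230,00 USD",
}

for label, text in samples.items():
    print(f"{label}: {find_total(text)}")
```

Only the clean sample matches. The other two return `None`, and a caller that does not check for `None` passes the gap downstream.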

The New Way: Schema-First API Integration

With modern AI document extraction APIs, you simply pass a JSON schema to the endpoint. You tell the API what you want, and the LLM figures out how to get it.

```json
{
  "schema": {
    "invoice_number": {
      "type": "string",
      "description": "Unique invoice identifier"
    },
    "total_amount": {
      "type": "number",
      "description": "Final amount due"
    },
    "line_items": {
      "type": "array",
      "items": {
        "sku": "string",
        "description": "string",
        "quantity": "number",
        "unit_price": "number"
      }
    }
  }
}
```

The API processes the document and returns clean, strictly validated JSON. There is absolutely no coordinate math required. There is no regex archaeology to perform when a new vendor is onboarded.
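
Even when an API promises validated output, a thin client-side check before writing to your database is cheap insurance. The sketch below assumes the response is a flat mapping of field name to value and that the schema uses the `string`, `number`, and `array` type names shown above. `validate_fields` and `TYPE_CHECKS` are hypothetical names, not part of any vendor SDK.

```python
# Maps the schema's type names to Python types (an assumption for this sketch).
TYPE_CHECKS = {
    "string": str,
    "number": (int, float),
    "array": list,
}

def validate_fields(schema, extracted):
    """Return a list of human-readable problems; an empty list means the payload fits."""
    problems = []
    for name, spec in schema.items():
        if name not in extracted:
            problems.append(f"missing field: {name}")
            continue
        value = extracted[name]
        expected = TYPE_CHECKS[spec["type"]]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            problems.append(
                f"{name}: expected {spec['type']}, got {type(value).__name__}"
            )
    return problems

schema = {
    "invoice_number": {"type": "string"},
    "total_amount": {"type": "number"},
    "line_items": {"type": "array"},
}

# Hypothetical payload with a string where a number belongs and a missing table.
payload = {"invoice_number": "INV-001", "total_amount": "12,450.00"}
for problem in validate_fields(schema, payload):
    print(problem)
```

A check like this takes minutes to write and catches type drift before it reaches the ERP.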

For more detailed insights on how these schemas define the future of business operations, review our guide on step-by-step data extraction workflows.

6. Understanding vs. Pattern-Matching: Why AI Doesn’t Break

Template OCR fails silently. A slightly shifted column, a rotated scan from a mobile device, a new font choice—and your legacy extraction pipeline silently returns garbage data that propagates downstream into your ERP database.

Conversely, semantic AI fails visibly. When the underlying LLM is uncertain about a specific field, premium AI document extraction APIs tell you exactly where the uncertainty lies:

```json
{
  "total_amount": {
    "value": 12450.00,
    "confidence": 0.94,
    "position": "bottom_right"
  },
  "line_items": {
    "value": [...],
    "confidence": 0.89,
    "alternatives": [
      {"interpretation": "three_line_items", "confidence": 0.89},
      {"interpretation": "two_line_items_with_subtotal", "confidence": 0.08}
    ]
  }
}
```

This paradigm shift changes everything. Instead of corrupted data hitting your database, you programmatically route low-confidence extractions to a manual review queue. Your accuracy on autopilot goes up immediately. Your midnight SRE fire drills go down.
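
The routing step described here can be sketched in a few lines. The example assumes each field arrives as an object with `value` and `confidence` keys, as in the response above. The 0.90 threshold and the name `split_by_confidence` are illustrative assumptions to tune against your own review data.

```python
REVIEW_THRESHOLD = 0.90  # assumed cutoff, not a vendor recommendation

def split_by_confidence(extraction, threshold=REVIEW_THRESHOLD):
    """Partition extracted fields into auto-accepted and needs-review buckets."""
    accepted, needs_review = {}, {}
    for field, result in extraction.items():
        target = accepted if result["confidence"] >= threshold else needs_review
        target[field] = result["value"]
    return accepted, needs_review

# Confidence values taken from the sample response; line items elided.
extraction = {
    "total_amount": {"value": 12450.00, "confidence": 0.94},
    "line_items": {"value": [], "confidence": 0.89},
}

accepted, needs_review = split_by_confidence(extraction)
print("auto-accepted:", sorted(accepted))
print("manual review:", sorted(needs_review))
```

With this threshold, `total_amount` flows straight through while `line_items` lands in the review queue.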

The model does not just extract characters; it actually comprehends the document. Complex tables are parsed as relational tables, not as rigid pixel grids. Line items are associated with their corresponding SKUs by reading the logical row context, not by measuring vertical pixel spacing. Currency is normalized because the model understands that “$1,234.56” and “USD 1.234,56” represent the exact same financial value. The bottom line is simple: you stop fighting document layouts and finally start building product features.
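
For comparison, here is roughly what a rule-based normalizer for just those two currency formats involves. It is a sketch built on one heuristic (whichever of `,` or `.` appears last is treated as the decimal separator), and `normalize_amount` is a hypothetical helper name.

```python
import re
from decimal import Decimal

def normalize_amount(raw):
    """Parse strings like '$1,234.56' or 'USD 1.234,56' into a Decimal.

    Heuristic: whichever of ',' or '.' appears last is the decimal separator.
    """
    digits = re.sub(r"[^\d.,]", "", raw)  # drop currency symbols, codes, spaces
    if digits.rfind(",") > digits.rfind("."):
        # European style: '.' groups thousands, ',' marks decimals
        digits = digits.replace(".", "").replace(",", ".")
    else:
        # US style: ',' groups thousands, '.' marks decimals
        digits = digits.replace(",", "")
    return Decimal(digits)

print(normalize_amount("$1,234.56") == normalize_amount("USD 1.234,56"))
```

The heuristic handles these two inputs but is ambiguous for a value such as “1,234” with no decimal part, which is the kind of edge case that turns into another rule in a template pipeline.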

7. AI Document Extraction APIs vs. In-House OCR: A Technical Comparison

Let’s get brutally practical. You are staring at a Jira ticket that reads: “Add invoice processing support for Vendor X.” Should your engineering team:

A) Spend 3 weeks building another template?

B) Ship a single API call by EOD?

The table below compares actual engineering metrics when evaluating AI document extraction APIs vs. In-House OCR architectures.

| Metric | Legacy OCR Pipeline (In‑House) | AI Document Extraction APIs | Why It Matters |
|---|---|---|---|
| Dev effort per new vendor | 40–120 hours (regex + testing) | 0.5–2 hours (JSON schema updates) | Velocity. Every hour spent on OCR is an hour not spent on core product features. |
| Infrastructure overhead | 2–8 dedicated GPU instances. Monthly cost: $300–$1,200+. | Zero. No VMs, no container sprawl, no Redis queues for retries. | Opex. Your CFO cares about cloud bills. Your SRE cares about pager load. |
| Accuracy on unseen docs | 50–70% (after manual tuning). Degrades heavily with format complexity. | 85–98% (out‑of‑the‑box). Improves automatically with model updates. | Data quality downstream. Low accuracy equals garbage data in your ERP. |
| Maintenance burden | Weekly fire drills, regex archaeology, bounding‑box drift. | Zero. The API provider handles all model updates, uptime, and scaling. | Team morale. Burnout from OCR firefighting is a highly documented reality. |
| Tech debt | Extremely High. Custom OCR code becomes legacy nobody wants to touch. | None. The API is a clean external dependency with clear enterprise SLAs. | Long-term health. Who wants to inherit 5,000 lines of Tesseract config? |

Table: Technical performance comparison between legacy OCR and modern API-first architectures.

8. Why CTOs Are Forcing the Shift to API-First

CTOs are not pushing for AI document extraction APIs because they are lazy. They are buying them because it is the strategic choice. The fastest way to win in a competitive SaaS market is to out‑execute the competition. That means focusing expensive engineering time on competitive moats, not on building commodity plumbing.

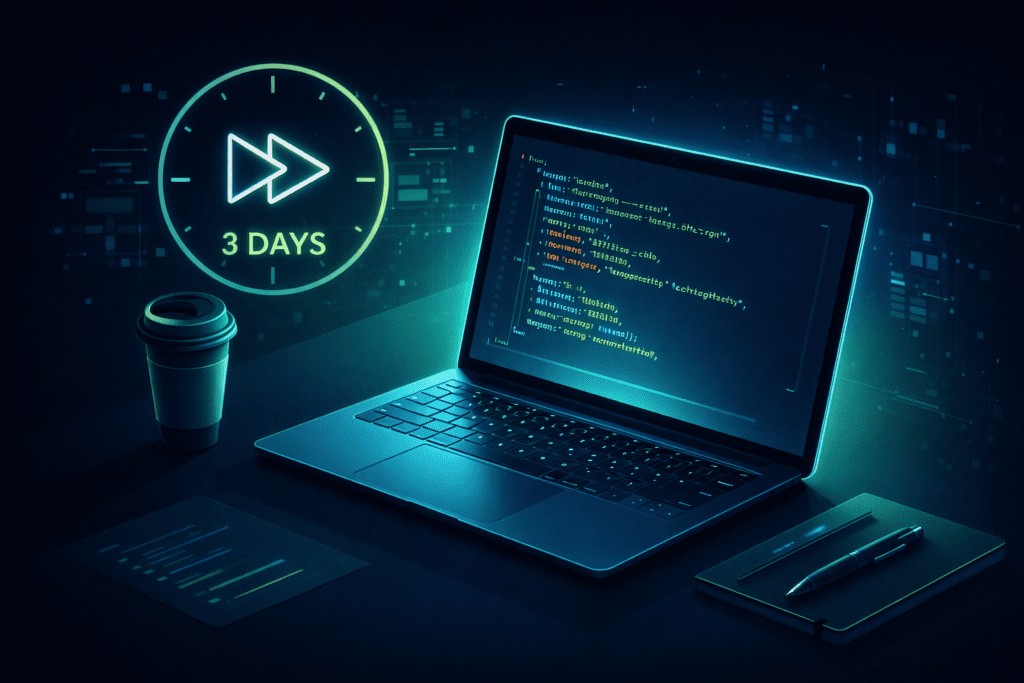

Developer Velocity: From Months to Days

Template OCR doesn’t just cost money; it costs opportunity. Every engineer-week spent debugging regex is a core feature not shipped to your customers.

- Legacy OCR Timeline: 3 engineers × 2 months = ~960 hours.

- API-First Timeline: 1 engineer × 3 days = ~24 hours.

The velocity multiplier isn’t 2×; it is an incredible 40×. That is the literal difference between shipping a product in Q2 versus shipping it in Q4.
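
The arithmetic behind those two timelines is easy to reproduce. The sketch assumes an 8-hour working day and a 160-hour working month (4 weeks of 40 hours), which is what the hour totals above imply.

```python
HOURS_PER_DAY = 8      # assumed working day
HOURS_PER_MONTH = 160  # assumed: 4 weeks x 40 hours

legacy_hours = 3 * 2 * HOURS_PER_MONTH  # 3 engineers x 2 months
api_hours = 1 * 3 * HOURS_PER_DAY       # 1 engineer x 3 days

multiplier = legacy_hours / api_hours
reduction = 1 - api_hours / legacy_hours

print(f"{legacy_hours} h vs {api_hours} h -> {multiplier:.0f}x, {reduction:.1%} fewer hours")
```

Swap in your own team size and estimates to see where your project would land.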

Infrastructure Costs: Managed vs Self-Hosted Reality

Self‑hosted OCR feels cheap until you actually run the numbers. Hidden costs most teams miss include:

- GPU Instances: Tesseract itself runs on CPU, but custom table-detection models and heavy OpenCV preprocessing often push teams onto GPU instances costing $1.50–$4.50/hour.

- Memory Leaks: OpenCV and Python garbage collection isn’t pretty, often requiring container restarts every 48 hours.

- Scaling Ops: During peak load, you must spin up more GPU VMs, meaning you are paying for idle capacity 80% of the time.

Integrating AI document extraction APIs offers predictable pricing: you pay $X per 1,000 pages. No GPUs. No containers. No SRE pagers going off at 3 AM because Tesseract ran out of memory (OOM).
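
To compare the two models on equal terms, it helps to convert a self-hosted bill into an effective price per 1,000 pages. The sketch below uses the hourly rates quoted above. The instance count, the 730-hour month, and the 50,000-page volume are placeholder assumptions to replace with your own numbers.

```python
HOURS_PER_MONTH = 730  # average month: 8,760 hours / 12

def self_hosted_monthly_cost(hourly_rate, instances, hours=HOURS_PER_MONTH):
    """Compute-only cost of always-on instances; excludes engineering time."""
    return hourly_rate * instances * hours

def cost_per_1000_pages(monthly_cost, pages_per_month):
    """Effective price per 1,000 pages at a given monthly volume."""
    return monthly_cost / (pages_per_month / 1000)

# Placeholder inputs: 2 instances at the low and high hourly rates, 50,000 pages/month.
pages = 50_000
for rate in (1.50, 4.50):
    monthly = self_hosted_monthly_cost(rate, instances=2)
    print(f"${rate:.2f}/h -> ${monthly:,.0f}/month, "
          f"${cost_per_1000_pages(monthly, pages):.2f} per 1,000 pages")
```

Set the result next to a vendor’s quoted per-1,000-page price, then add maintenance hours to the self-hosted side.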

9. Real-World Migration: Legacy OCR to API in 3 Days

Take a rapidly scaling logistics platform that processed 80,000 Bills of Lading (BOLs) monthly. Their legacy stack relied on Tesseract 4, OpenCV, and 2,100 lines of complex Python regex. Accuracy on handwritten BOLs was a miserable 27%, and their monthly cloud bill was $3,800 just for GPU instances. They had one engineer whose entire job was OCR maintenance; they called him the “PDF whisperer.” He quit.

They migrated to robust AI document extraction APIs in exactly three days.

- Day 1: Replace the OCR microservice with a single POST request.

- Day 2: Build the webhook endpoint for async processing.

- Day 3: Parallel run—route 50% of traffic to the new API and compare results.

```python
import json
import os

import requests

API_KEY = os.getenv("PARSERDATA_API_KEY")

# Fields to extract, keyed by name with the expected type
schema = {
    "invoice_number": "string",
    "total_amount": "number",
    "line_items": "table",
}

with open("invoice.pdf", "rb") as f:
    response = requests.post(
        "https://api.parserdata.com/v1/extract",
        headers={"X-API-Key": API_KEY},
        files={"file": f},
        data={"schema": json.dumps(schema)},
        timeout=300,
    )

response.raise_for_status()  # surface HTTP errors instead of parsing an error body
data = response.json()
```

That’s the core integration. Compare it to a brittle OCR microservice with custom preprocessing, regex, and layout rules.
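
The Day 3 parallel run can be sketched as a deterministic traffic split plus a field-level diff. This is one possible approach, not the platform’s actual implementation. `routes_to_new_api` and `diff_fields` are hypothetical helpers, and both pipelines are assumed to return flat dictionaries of field values.

```python
import hashlib

def routes_to_new_api(document_id, percent=50):
    """Deterministically assign `percent` of documents to the new API."""
    digest = hashlib.sha256(document_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100 < percent

def diff_fields(legacy, candidate):
    """Return {field: (legacy_value, candidate_value)} for every mismatch."""
    keys = legacy.keys() | candidate.keys()
    return {
        key: (legacy.get(key), candidate.get(key))
        for key in sorted(keys)
        if legacy.get(key) != candidate.get(key)
    }

# Hypothetical outputs from the two pipelines for the same document.
legacy_result = {"invoice_number": "INV-001", "total_amount": 1230.0}
api_result = {"invoice_number": "INV-001", "total_amount": 1230.0, "line_items": []}

if routes_to_new_api("doc-0001", percent=100):
    print(diff_fields(legacy_result, api_result))
```

Hashing the document ID keeps the split stable across retries, so the same document always takes the same path while you collect mismatches.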

The outcomes within the first 30 days were staggering: accuracy hit 95.3% on unseen formats, latency dropped from 32 seconds to 4.2 seconds, and the monthly infrastructure bill fell to $1,200. They added 12 new logistics partners without writing a single new line of extraction code. The lesson? Sometimes the best code is the code you delete.

10. Decision Framework: When to Build vs. Buy in 2026

Not all OCR needs to be outsourced to an API. Here is an honest architectural framework to help you make the right decision:

Build In-House If:

- You process only 1-2 highly fixed, unchanging formats.

- Your infrastructure requires a strict, air-gapped environment with zero internet access.

- Your document volume is micro-scale (<1,000 pages/month).

- Building an OCR or PDF editor is your actual core product offering.

Buy (Use AI Document Extraction APIs) If:

- You process more than 5 document formats or have unpredictable vendor layouts.

- Your required time-to-market is less than 3 months.

- High accuracy is business-critical (95%+ out-of-the-box).

- Your engineering team is lean and you cannot dedicate headcount to OCR maintenance.

11. How to Evaluate Document Extraction APIs

Not all AI document extraction APIs are created equal. Here is a checklist for CTOs and Lead Developers when evaluating extraction engines:

- Accuracy Benchmarks: Test on your real, messy documents—not the vendor’s curated demo samples.

- Schema Depth: Does the API support complex nested JSON arrays (e.g., extracting multiple sub-items within an invoice line item)?

- Latency and SLA: Look at p95 and p99 latency percentiles, not just the average. For uptime, a 99.9% SLA allows roughly 8.8 hours of downtime per year, while 99.99% allows about 53 minutes.

- Compliance: Look for SOC2 Type II certification, GDPR-ready data retention policies, and encryption standards.
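
The latency and SLA items in this checklist come down to simple arithmetic you can run on your own trial data. The sketch below uses only the Python standard library. The latency samples are placeholder values, and `allowed_downtime_hours` and `tail_latencies` are hypothetical helper names.

```python
import statistics

HOURS_PER_YEAR = 365 * 24  # 8,760

def allowed_downtime_hours(uptime_percent):
    """Hours per year a service may be down while still meeting its SLA."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)

def tail_latencies(samples_seconds):
    """Return (p95, p99) from a list of observed request latencies."""
    cuts = statistics.quantiles(samples_seconds, n=100)  # 99 cut points
    return cuts[94], cuts[98]

for sla in (99.9, 99.99):
    print(f"{sla}% uptime allows {allowed_downtime_hours(sla):.2f} hours of downtime per year")

# Placeholder latencies in seconds; replace with measurements from your own test run.
latencies = [3.8, 4.0, 4.1, 4.2, 4.2, 4.3, 4.5, 4.9, 6.0, 11.5]
p95, p99 = tail_latencies(latencies)
print(f"mean={statistics.mean(latencies):.2f}s p95={p95:.2f}s p99={p99:.2f}s")
```

A single slow outlier barely moves the mean but dominates the tail, which is why the percentiles matter more than the average.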

💡 Tip: If you are currently evaluating vendors or deciding on architecture, we highly recommend reading our detailed Legacy OCR vs API comparison to see how modern endpoints stack up against legacy pipelines. For a deep dive into the code and architecture changes required, check out our complete legacy OCR migration guide.

Conclusion: The Shift Is Already Happening

Template-based OCR isn’t just outdated—it is a massive technical liability. Every sprint your team spends fixing coordinate drift and patching regex is a sprint they aren’t building your core product.

Relying on modern AI document extraction APIs is no longer just the “future of work”. It is the current operational baseline for top-tier engineering organizations. The difference in developer velocity translates directly into an undeniable competitive advantage. The question isn’t “Should we switch?” anymore. It’s “How fast can we migrate before the next vendor breaks our pipeline?”

Ready to stop building OCR pipelines?

Get your API key in under 2 minutes and start testing.

```bash
# Extract fields from invoice.pdf (assumes your key is exported as API_KEY)
curl -X POST https://api.parserdata.com/v1/extract \
  -H "X-API-Key: $API_KEY" \
  -F 'schema={"invoice_number":"string","total_amount":"number","line_items":"table"}' \
  -F "file=@invoice.pdf"
```

Start Free Trial

Get your API key instantly. No credit card required.

Read API Docs

OpenAPI spec + SDKs for Python, Node.js and Go.

Watch Product Demo

See how ParserData extracts structured data from complex documents in seconds.

Frequently Asked Questions

Why is template-based OCR considered outdated?

Template OCR relies heavily on strict pixel coordinates and rigid regular expressions. When a document’s layout changes slightly (such as a shifted column, a rotated scan, or a new font), the extraction breaks silently. This forces development teams into continuous, expensive maintenance cycles.

How do AI document extraction APIs differ from legacy OCR?

Instead of merely identifying characters within a geometric box, AI document extraction APIs use Large Language Models (LLMs) to understand the semantic context of the document. They can locate and extract fields like “Total Amount” or “Invoice Number” regardless of where they are positioned on the page.

Can AI document extraction APIs handle complex nested tables?

Yes. Modern semantic AI understands hierarchical relationships and data structures natively. It can reconstruct multi-page, complex nested line-item tables into perfectly formatted JSON arrays without requiring developers to draw manual bounding boxes or write custom parsing logic.

What is the typical ROI when switching to an API-first extraction model?

Engineering teams typically observe an 80% reduction in development time and maintenance overhead. Instead of spending months building custom OCR pipelines with OpenCV and Tesseract, developers can integrate AI document extraction APIs via a simple JSON schema in a matter of days.

How do AI extraction APIs handle compliance and data security?

Enterprise-grade AI document extraction APIs are typically SOC2 Type II certified and fully GDPR compliant. They often feature zero-data-retention modes, ensuring that sensitive financial, legal, or medical documents are processed in-memory and immediately discarded, significantly reducing compliance risk.

Recommended Reading

- The Hidden Manual Invoice Processing Cost in 2026: A CFO’s Guide

- The Role of API in Automation: The Nervous System of Business

- What Is Excel Automation? The Ultimate Explainer Guide

- How to Extract Data from Documents: The Definitive Guide

Disclaimer: All comparisons in this article are based on publicly available information and our own product research as of the date of publication. Features, pricing, and capabilities may change over time.